The Challenges of Scaling AI Models in Production

Mastering the Challenges of Scaling AI Models in Production 2025

Key Takeaways

Scaling AI models in production today demands a smarter, more adaptable approach that balances performance, cost, and security. These insights will help you optimize AI deployments for real-world success without overwhelming your resources or infrastructure.

- Marginal gains come with bigger models; expect only slight improvements despite massive compute, so focus on efficient tuning and model pruning to maximize performance.

- Integrate AI seamlessly by designing architectures that mesh with diverse legacy systems and real-time data flows to avoid costly operational bottlenecks and compliance risks.

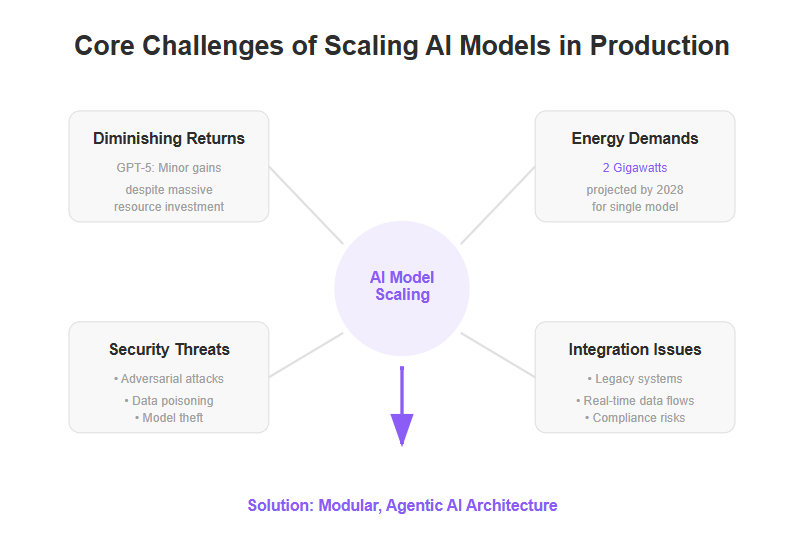

- Plan for skyrocketing energy needs; training large AI models could draw up to 2 gigawatts of power by 2028, so adopt energy-efficient algorithms and green cloud platforms to cut costs and emissions.

- Combat expanding AI security risks with dynamic, adaptive frameworks that address adversarial attacks, data poisoning, and model theft through real-time threat detection and cross-team collaboration.

- Overcome production bottlenecks like latency and throughput by leveraging distributed computing, load balancing, and optimized data pipelines for smoother, scalable performance.

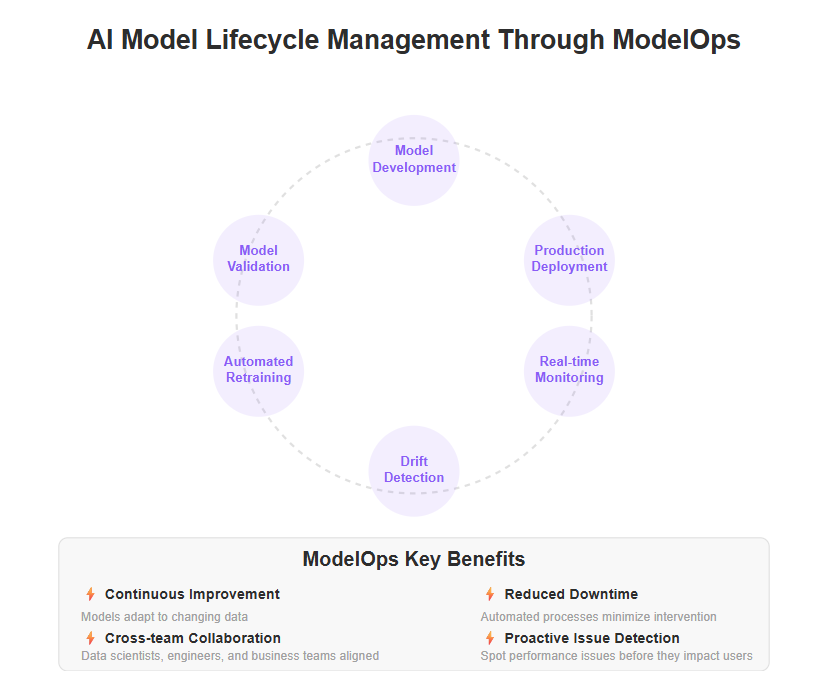

- Embed continuous ModelOps practices including automated retraining and drift detection to keep AI models fresh and reliable amidst evolving data and user behavior.

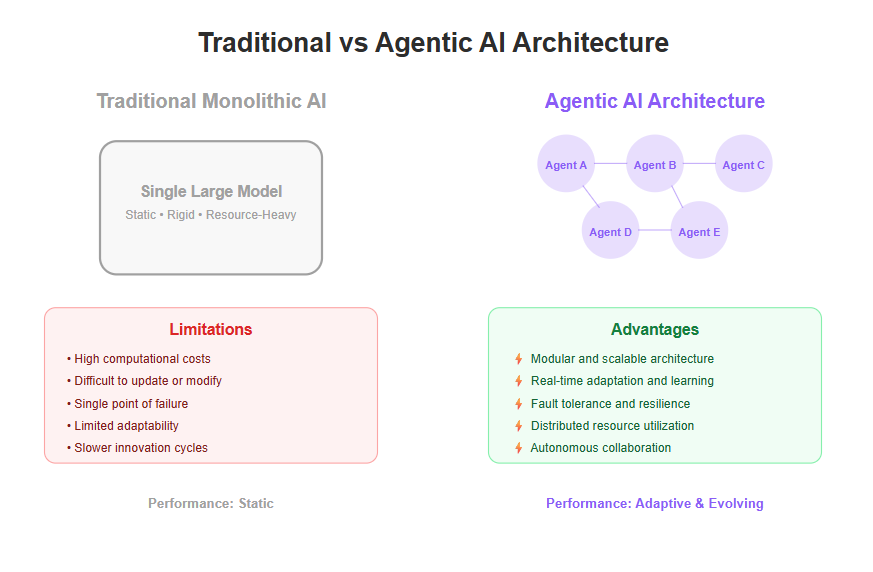

- Shift to modular, agentic AI architectures where autonomous agents collaborate and self-correct, enabling real-time adaptability and fault tolerance over brittle monolithic models.

- Future-proof your AI roadmap by embracing scalable infrastructure and resilient design that enables secure, flexible AI growth without sacrificing speed or stability.

Master these strategies to build AI systems that scale smartly—balancing innovation with operational savvy to unlock real business impact in 2025 and beyond. Dive into the full article to learn how to put these principles into action.

Introduction

Imagine investing massive resources into an AI model only to see minimal performance gains—a frustrating reality many teams face in 2025. The old playbook of “bigger models, more compute” no longer guarantees success.

Scaling AI in production today isn’t just a tech upgrade; it’s a complex puzzle involving operational hurdles, skyrocketing energy demands, and intensifying security risks. Many startups and SMBs feel stuck between ambitious AI goals and practical deployment challenges.

Involving business leaders early in AI projects is essential to ensure alignment with business objectives and to secure the necessary support and funding for long-term success.

What if there was a smarter way to scale—one that prioritizes flexibility, efficiency, and resilience over brute force? This approach shifts the focus from sheer power to modular architectures, seamless integration, and proactive lifecycle management.

You’ll discover how to navigate:

- The hidden costs behind scaling AI models, including energy and security

- Practical strategies for tuning and optimizing performance without waste

- The importance of scalable infrastructure and real-time governance

- Emerging trends like agentic AI that promise adaptable, autonomous solutions

Many AI projects struggle to move beyond the proof of concept stage due to a lack of strategic alignment and clearly defined business objectives.

These insights aren’t just theoretical—they’re grounded in real-world experience helping businesses like yours bridge AI ambitions with operational reality.

By rethinking what “scale” means and embracing agility, you can build AI systems that grow smarter and more reliable as demands evolve.

Let’s start by unpacking the core challenges that often trip up production AI deployments, setting the stage for proven strategies to overcome them.

Understanding the Core Challenges of Scaling AI Models in Production

Scaling AI models in 2025 is no longer just about beefing up compute power or increasing model size. The landscape has shifted, and simply throwing more resources at AI often leads to diminishing returns. For example, OpenAI’s GPT-5 reportedly showed only minor improvements over its predecessor despite massive resource investments, suggesting a plateau in raw scaling benefits. Scaling responsibly also requires robust AI governance frameworks that ensure ethical deployment and regulatory compliance.

Operational Complexity and Resource Demands

Deploying AI at scale inside complex enterprise workflows is a whole different beast. Integration means juggling:

- Diverse legacy systems

- Real-time data flows

- Compliance and governance

Strong data governance and data management practices are essential for ensuring data quality, security, and compliance across enterprise systems, supporting scalable and effective AI deployment.

All while ensuring AI logic doesn’t become a bottleneck or black box.

On top of this, the resource intensiveness of large-scale AI is staggering. Training a single state-of-the-art model could require up to 2 gigawatts of power by 2028, fueling environmental and cost concerns (AI training to have whopping power needs, report says). Managing data efficiently and optimizing models are critical for sustainable scaling and for minimizing operational bottlenecks.

Security Risks and New Architectural Needs

As AI permeates production environments, security vulnerabilities multiply. Attackers exploit adversarial inputs, data poisoning, and model theft. CISOs increasingly worry about these expanding attack surfaces and are demanding proactive, adaptive security frameworks (I am a chief security officer and here’s why I think AI Cybersecurity has only itself to blame for the huge problem that’s coming). Ensuring regulatory compliance and robust data security is critical for protecting AI systems, maintaining trust, and meeting evolving legal standards.

These challenges push enterprises to rethink static monolithic AI models. Instead, the focus is shifting toward modular, adaptable AI systems—think of AI as a living network of collaborating agents rather than one giant, rigid brain. This flexibility improves resilience, scalability, and governance (The enterprise AI paradox: why smarter models alone aren’t the answer). Adopting a phased approach to production deployment, moving from pilot to full rollout, helps mitigate risks and supports continuous improvement.

Key Takeaways You Can Use Today

- Expect marginal performance gains when scaling large AI models; more compute isn’t always better.

- Prioritize AI architecture that integrates seamlessly with your existing systems to avoid operational overload.

- Prepare for both energy costs and security risks upfront by adopting modular, secure AI frameworks.

Picture this: instead of wrestling with one massive model dragging down your infrastructure, imagine a fleet of smart, autonomous AI agents smoothly handling tasks, adapting on the fly, and staying secure—even under pressure.

Scaling AI in 2025 demands a mindset shift from “bigger is better” to “smarter and more flexible.” This approach optimizes resources, cuts risks, and positions your AI for real-world deployment success.

Strategic Foundations for Optimizing AI Model Performance at Scale

Best Practices in AI Model Tuning and Resource Management

Scaling AI isn’t just about throwing more compute at a problem: efficiency often beats brute force. Start by focusing on model tuning techniques that squeeze out maximum performance without a matching jump in resource demands.

Key tactics include:

- Parameter tuning to find that sweet spot of accuracy versus resource cost

- Pruning, cutting away redundant model weights to reduce complexity

- Quantization, storing weights at lower numeric precision (for example, 8-bit integers instead of 32-bit floats) to shrink models with limited accuracy loss
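To make pruning and quantization concrete, here is a minimal sketch in plain Python. It assumes simple magnitude-based pruning and symmetric 8-bit quantization over a flat list of weights. The function names and the sample weights are illustrative only, and real ML frameworks ship their own, more sophisticated tooling.

```python
from typing import List, Tuple


def prune_by_magnitude(weights: List[float], sparsity: float) -> List[float]:
    """Zero out the smallest-magnitude weights; `sparsity` is the fraction to drop."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_drop = int(len(weights) * sparsity)
    # Indices ordered from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    dropped = set(order[:n_drop])
    return [0.0 if i in dropped else w for i, w in enumerate(weights)]


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric linear quantization into the integer range [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Map the stored integers back to approximate float weights."""
    return [v * scale for v in quantized]


# Illustrative weights, not taken from any real model.
weights = [0.82, -0.03, 0.41, 0.007, -0.65, 0.12]
pruned = prune_by_magnitude(weights, sparsity=0.5)
q, scale = quantize_int8(pruned)
restored = dequantize(q, scale)
```

In this toy example, half the weights are zeroed and the rest are stored as small integers plus one shared scale factor, so each restored weight differs from its pruned value by at most about half the scale. Whether that error is acceptable depends on the model and should be checked against a validation set.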

Robust model development and model training processes are essential for maintaining high-performing machine learning models at scale, ensuring ongoing accuracy and adaptability as data and requirements evolve.

Clean, consistent, high-quality data acts like the “fuel” for these optimizations. Garbage in, garbage out still rules — your models need a solid data foundation to stay sharp at scale.

Picture tuning a race car engine: you don’t just add horsepower, you adjust every part to go faster on less fuel. That’s how scalable AI works.

“Scaling smarter means tuning sharper, not just building bigger models.”

For a deeper dive, check out “7 Proven Strategies for Optimizing AI Model Performance at Scale” which breaks down these tactics in detail.

Overcoming Technical Barriers in Production Environments

Deploying models in the real world brings a fresh set of hurdles. You’ll face bottlenecks like latency, limited throughput, and infrastructure ceilings that can throttle performance below expectations.

Challenges span from cloud servers down to edge devices, with network variability and hardware limits squeezing your pipeline.

Common production pain points include:

- Latency spikes causing slow responses in user-facing apps

- Throughput caps restricting how many requests an AI system can handle concurrently

- Infrastructure constraints from costly GPUs to bandwidth bottlenecks

- Complex data pipelines that introduce delays or data inconsistency

Ensuring reliable AI deployment requires ongoing model monitoring to detect issues such as model drift, bias, and performance degradation, helping maintain consistent and effective operations in production.

Tackling these barriers calls for architecture tweaks and smarter workflows:

- Adopt distributed computing with load balancing to spread workloads evenly

- Optimize data pipelines for streamlined, consistent input delivery

- Use caching and batching to reduce repetitive computations
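The caching and batching tactics above can be sketched in a few lines of Python. This is an illustration under stated assumptions: `fake_model` is a hypothetical stand-in for a real inference call, and the batch size and cache size are arbitrary numbers you would tune for your own workload.

```python
from functools import lru_cache
from typing import Callable, Iterable, Iterator, List, Sequence


def fake_model(batch: Sequence[str]) -> List[int]:
    """Hypothetical stand-in for a real model call; scores each input by its length."""
    return [len(text) for text in batch]


def batched(items: Iterable[str], batch_size: int) -> Iterator[List[str]]:
    """Group a stream of requests into batches of at most `batch_size` items."""
    batch: List[str] = []
    for item in items:
        batch.append(item)
        if len(batch) == batch_size:
            yield batch
            batch = []
    if batch:  # flush the final, possibly smaller batch
        yield batch


def predict_all(
    items: Iterable[str],
    model: Callable[[Sequence[str]], List[int]],
    batch_size: int = 4,
) -> List[int]:
    """Call the model once per batch instead of once per item."""
    results: List[int] = []
    for batch in batched(items, batch_size):
        results.extend(model(batch))
    return results


@lru_cache(maxsize=1024)
def cached_predict(text: str) -> int:
    """Serve repeated identical inputs from memory instead of re-running the model."""
    return fake_model([text])[0]
```

Batching trades a little latency for throughput, and caching only helps when identical inputs recur and the model's answer for them does not need to change, so both are worth measuring before adopting.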

Understanding model behavior and model execution is essential for diagnosing and resolving production issues, as it provides insight into how AI models process inputs and perform within the system.

Imagine your AI deployment like a crowded kitchen during dinner service — efficient prep, organized stations, and clear communication prevent chaos and keep orders moving fast.

“Address the bottlenecks early to avoid expensive slowdowns at scale.”

Refer to “5 Critical Technical Barriers to Scaling AI Models in Production” for more on overcoming these real-world obstacles.

Mastering these strategic foundations transforms bulky, resource-heavy AI into agile, scalable solutions ready for production — efficient tuning and smart infrastructure win the day.

Implementing Scalable Infrastructure Solutions for Robust Production AI

Cloud Platforms and Distributed Computing Frameworks

Scaling AI in production demands flexible, cost-efficient cloud infrastructure that can pool resources and adapt on demand.

Here’s why cloud platforms are the go-to:

- Elastic scalability lets you spin up or down compute power instantly.

- Distributed training frameworks break down massive AI models to run in parallel, speeding up learning (Scalable, Distributed AI Frameworks: Leveraging Cloud Computing for Enhanced Deep Learning Performance and Efficiency).

- Load balancing ensures no single server gets overwhelmed, smoothing performance peaks.

- Auto-scaling and orchestration tools keep your system humming by managing workloads and uptime automatically.
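As a rough illustration of load balancing and auto-scaling, the sketch below routes each request to the worker with the fewest in-flight requests and computes a replica count from observed utilization. The class and function names are hypothetical. The scaling rule is modeled on the proportional formula used by the Kubernetes Horizontal Pod Autoscaler, but this is a simplified stand-in rather than any platform's actual implementation.

```python
import math
from typing import Dict, Iterable


class LeastLoadedBalancer:
    """Send each request to the worker with the fewest in-flight requests."""

    def __init__(self, workers: Iterable[str]) -> None:
        self.in_flight: Dict[str, int] = {w: 0 for w in workers}

    def acquire(self) -> str:
        # Ties go to the worker that was registered first.
        worker = min(self.in_flight, key=self.in_flight.get)
        self.in_flight[worker] += 1
        return worker

    def release(self, worker: str) -> None:
        self.in_flight[worker] = max(0, self.in_flight[worker] - 1)


def desired_replicas(
    current: int,
    observed_util: float,
    target_util: float,
    min_replicas: int = 1,
    max_replicas: int = 20,
) -> int:
    """Scale the replica count in proportion to observed vs. target utilization."""
    if target_util <= 0:
        raise ValueError("target_util must be positive")
    wanted = math.ceil(current * observed_util / target_util)
    # Clamp so a noisy metric cannot scale to zero or without bound.
    return max(min_replicas, min(max_replicas, wanted))
```

For example, four replicas running at 75% utilization against a 50% target would scale to six. Real orchestrators add cooldown periods and metric smoothing on top of a rule like this to avoid thrashing.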

Edge AI extends scalable AI and machine learning capabilities to low-power, resource-constrained devices, reducing latency and improving efficiency in real-world applications.

Picture your production AI as a well-oiled orchestra — cloud tools coordinate each instrument to play perfectly together, no matter how complex the score.

In fact, one report projects that by 2028, training a single large AI model could draw up to 2 gigawatts of power, illustrating the need for efficient infrastructure strategies now (AI training to have whopping power needs, report says).

Continuous integration practices streamline deployment and maintenance of distributed AI systems, ensuring stability and rapid iteration as models and datasets evolve.

For a deep dive into setup tips and vendor options, check out “Transform Production AI with Scalable Infrastructure Solutions.”

Energy Efficiency and Environmental Considerations

AI’s growing footprint isn’t just financial — it’s environmental (Environmental impact of artificial intelligence).

Training large models is energy-intensive, contributing to significant carbon emissions. But the industry is pushing back with:

- Energy-efficient algorithms that reduce compute without sacrificing accuracy.

- Green data centers powered by renewables, which cut the carbon cost.

- New hardware optimized for low-power AI inference.

Maintaining data integrity and continuously monitoring data patterns are also crucial for ensuring efficient and accurate AI operations, as they help safeguard data quality and adapt to evolving trends.

Balancing performance and sustainability isn’t optional — it’s becoming a business imperative. Imagine the difference if your AI deployments ran on clean energy with smart algorithms shaving off waste.

Practical steps you can start applying today include:

- Prioritize vendors using renewable-powered data centers.

- Tune your models for efficiency with pruning and quantization.

- Monitor energy consumption alongside performance metrics.
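Tracking energy next to performance can start with simple arithmetic. The sketch below assumes you can obtain average power draw per measurement window from some telemetry source; the `Sample` fields and the numbers in the usage note are hypothetical. It converts power and time into energy and normalizes by requests served.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class Sample:
    seconds: float    # length of the measurement window
    avg_watts: float  # average power draw in the window (hypothetical telemetry)
    requests: int     # requests served in the window


def energy_kwh(samples: Iterable[Sample]) -> float:
    """Energy = power x time. Watts x seconds gives joules; 1 kWh = 3.6e6 J."""
    joules = sum(s.seconds * s.avg_watts for s in samples)
    return joules / 3_600_000


def wh_per_1k_requests(samples: List[Sample]) -> float:
    """Watt-hours consumed per 1,000 requests, a simple efficiency metric."""
    total_requests = sum(s.requests for s in samples)
    if total_requests == 0:
        return float("inf")
    watt_hours = energy_kwh(samples) * 1000
    return watt_hours / total_requests * 1000
```

As a usage example, one hour at an average of 300 W while serving 10,000 requests works out to 0.3 kWh, or about 30 Wh per 1,000 requests. Plotting that figure alongside latency and accuracy makes it easier to see whether an optimization such as pruning actually saves energy.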

These strategies align your AI pipeline with sustainability trends and regulatory expectations, future-proofing production environments.

Think of it like upgrading a gas guzzler to a hybrid — same horsepower, but way more efficient.

Scaling AI production means turning vast infrastructure into a finely tuned engine. Flexible cloud platforms paired with smart energy practices let you hit growth targets without breaking budgets or the planet.

Getting infrastructure right isn’t just tech savvy — it’s a competitive edge and a real-world commitment to building scalable, sustainable AI systems.

Strengthening AI Model Lifecycle Management Through ModelOps

Governance, Monitoring, and Continuous Improvement

ModelOps is the backbone of smooth AI deployment and maintenance. Think of it as the glue that keeps AI models aligned from development to production, ensuring they don’t just launch but keep performing well.

Key processes include:

- Automated retraining to refresh models as new data flows in

- Real-time monitoring for performance dips and unexpected behaviors

- Tight collaboration between data scientists, engineers, and business teams to prevent silos
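One way to picture real-time monitoring feeding automated retraining is a rolling accuracy check. The sketch below is a minimal illustration, assuming ground-truth labels eventually arrive for production predictions. The class name, window size, and thresholds are hypothetical and would need tuning for a real system.

```python
from collections import deque
from typing import Deque, Optional


class RollingAccuracyMonitor:
    """Track accuracy over the most recent predictions and flag sustained dips."""

    def __init__(self, window: int = 100, min_accuracy: float = 0.9,
                 min_samples: int = 20) -> None:
        self.outcomes: Deque[bool] = deque(maxlen=window)  # old results fall off
        self.min_accuracy = min_accuracy
        self.min_samples = min_samples

    def record(self, prediction: object, label: object) -> None:
        self.outcomes.append(prediction == label)

    def accuracy(self) -> Optional[float]:
        if not self.outcomes:
            return None
        return sum(self.outcomes) / len(self.outcomes)

    def needs_retraining(self) -> bool:
        # Wait for enough samples so a few early misses do not trigger a retrain.
        if len(self.outcomes) < self.min_samples:
            return False
        return self.accuracy() < self.min_accuracy
```

In practice the `needs_retraining` signal would open a ticket or kick off a pipeline run rather than retrain blindly, so that a human or a validation gate can confirm the new model is actually better.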

Effective data governance is essential for maintaining data quality, security, and compliance throughout these processes.

These practices reduce surprises and help teams spot issues before they impact users.

Picture this: a model in production starts underperforming because the data it sees has changed subtly. Without monitoring, that decline could go unnoticed for weeks, costing time and revenue. Protecting customer data through robust governance and continuous monitoring is also critical to prevent breaches and ensure compliance.

For teams ready to level up, “Unlocking Seamless AI Model Deployment With Cutting-edge Automation” covers how CI/CD pipelines streamline this workflow, making updates faster and safer.

Managing AI Model Drift in Dynamic Production Environments

Models don’t stay fresh forever. Over time, data shifts, user behavior evolves, and environments change, causing what’s called model drift. Left unmanaged, drift degrades performance: the accuracy and reliability of predictions decline over time.

To combat this, companies rely on:

- Drift detection systems that flag when predictions start skewing

- Version control to manage multiple model iterations easily

- Adaptive retraining that fine-tunes models regularly without full redeployment
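A drift detector can be as simple as comparing the distribution of a feature in production against the distribution seen at training time. The sketch below uses the Population Stability Index (PSI), one common choice among several and not something this article prescribes. The bin count, the smoothing constant `eps`, and the sample data are assumptions for illustration.

```python
import math
from typing import List, Sequence


def _bin_fractions(values: Sequence[float], lo: float, hi: float,
                   bins: int, eps: float = 1e-6) -> List[float]:
    """Fraction of values per equal-width bin, floored at eps to avoid log(0)."""
    if not values:
        raise ValueError("values must not be empty")
    counts = [0] * bins
    width = (hi - lo) / bins or 1.0
    for v in values:
        idx = int((v - lo) / width)
        idx = min(max(idx, 0), bins - 1)  # clamp out-of-range values into edge bins
        counts[idx] += 1
    return [max(c / len(values), eps) for c in counts]


def population_stability_index(reference: Sequence[float],
                               current: Sequence[float],
                               bins: int = 10) -> float:
    """PSI = sum over bins of (cur - ref) * ln(cur / ref); 0 means identical."""
    lo, hi = min(reference), max(reference)
    ref = _bin_fractions(reference, lo, hi, bins)
    cur = _bin_fractions(current, lo, hi, bins)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


# Illustrative data: production values have shifted toward the upper half.
reference = [i / 100 for i in range(100)]
shifted = [0.5 + i / 200 for i in range(100)]
psi_same = population_stability_index(reference, reference)
psi_shifted = population_stability_index(reference, shifted)
```

A commonly quoted rule of thumb treats a PSI above roughly 0.25 as a significant shift, but that threshold is a starting point to calibrate against your own data, not a standard.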

Operationalizing drift management isn’t optional—it’s critical for keeping AI relevant and reliable at scale.

Imagine a recommendation engine that stops matching customers’ current tastes because it hasn’t adapted to new trends. Drift detection can catch that mismatch early, triggering retraining that fixes recommendations before drop-offs occur.

For a deep dive, check out “The Essential Guide to Managing AI Model Drift in Production,” which offers practical tactics to stay ahead of these issues.

ModelOps turns AI scaling headaches into manageable steps by embedding governance, continuous monitoring, and adaptive retraining into your workflow. When you treat models like living products, not one-and-done projects, you unlock reliable, scalable AI that evolves alongside your business. Building this resilience upfront means less firefighting later and more confidence in your AI-powered decisions.

Enhancing Security and Resilience in Scalable AI Systems

Addressing Expanding Attack Surfaces and Emerging Threats

Scaling AI means widening the attack surface—more users, more data, more integration points. Common threats include:

- Adversarial attacks that manipulate inputs to cause AI errors

- Data poisoning aimed at corrupting training sets

- Model theft attempts to steal proprietary AI capabilities

AI-driven fraud detection systems play a crucial role in preventing security breaches and protecting sensitive data, especially in banking and financial sectors.

CISOs and enterprise leaders are sharpening their focus on these risks. Many see AI cybersecurity as an evolving battlefield, requiring agility instead of static defenses (I am a chief security officer and here’s why I think AI Cybersecurity has only itself to blame for the huge problem that’s coming).

The best defense? Deploy dynamic, adaptive security frameworks tailored for AI’s unique behaviors—like real-time threat detection and mitigation that adjusts as models learn and evolve.
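As one small building block of such a framework, per-client rate limiting can slow down bulk querying of a model endpoint, which is one way model extraction is attempted. The sketch below is a hypothetical sliding-window limiter. It is not a complete defense, and the class name and limits are assumptions for illustration.

```python
import time
from collections import defaultdict, deque
from typing import DefaultDict, Deque, Optional


class SlidingWindowLimiter:
    """Allow at most `max_requests` per client within any `window_seconds` span."""

    def __init__(self, max_requests: int, window_seconds: float) -> None:
        self.max_requests = max_requests
        self.window_seconds = window_seconds
        self._hits: DefaultDict[str, Deque[float]] = defaultdict(deque)

    def allow(self, client_id: str, now: Optional[float] = None) -> bool:
        # `now` can be injected for testing; otherwise use a monotonic clock.
        now = time.monotonic() if now is None else now
        hits = self._hits[client_id]
        # Forget requests that have aged out of the window.
        while hits and now - hits[0] >= self.window_seconds:
            hits.popleft()
        if len(hits) >= self.max_requests:
            return False  # over the limit: reject and do not record the request
        hits.append(now)
        return True
```

A rejected request is also a useful signal in its own right: logging which clients hit the limit gives the security team something concrete to investigate.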

Strong security is rooted in collaboration between AI teams and security experts. Imagine developers and defenders working side-by-side, spotting vulnerabilities early and patching them before damage occurs.

“AI’s complexity amplifies risk, but teamwork shrinks the window for attackers,” as one CISO recently noted.

Future-Proofing AI Deployments with Resilient Architectures

Building robust AI systems goes beyond walls and firewalls. The key is resilience through design:

- Incorporate redundancy and fault tolerance so that failures or attacks don’t bring down entire systems

- Use modular, agentic AI that can self-correct, isolate faults, and adapt in real-time

- Shift away from brittle monolithic models to autonomous AI agents that operate securely within enterprise ecosystems

Generative AI introduces both challenges and opportunities in building resilient and scalable AI architectures, as its advanced capabilities can drive innovation but also require significant computational resources and careful risk management.

Picture an AI system that reroutes workloads instantly if a node is compromised or automatically retrains a damaged component without downtime.
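The rerouting idea can be sketched as a small failover router. This is an illustration only: the replica functions and node names are hypothetical, and the sketch takes a replica out of rotation after repeated failures without ever probing it for recovery, which a production system would need to do.

```python
from typing import Callable, Dict, List


class FailoverRouter:
    """Try replicas in order, skipping any that have failed too many times in a row."""

    def __init__(self, replicas: Dict[str, Callable[[str], str]],
                 max_failures: int = 2) -> None:
        self.replicas = replicas
        self.failures: Dict[str, int] = {name: 0 for name in replicas}
        self.max_failures = max_failures

    def healthy(self) -> List[str]:
        return [n for n, f in self.failures.items() if f < self.max_failures]

    def call(self, payload: str) -> str:
        for name in self.healthy():
            try:
                result = self.replicas[name](payload)
            except Exception:
                self.failures[name] += 1  # count the failure, try the next replica
                continue
            self.failures[name] = 0  # a success resets the failure streak
            return result
        raise RuntimeError("no healthy replica available")


def flaky_replica(payload: str) -> str:
    """Hypothetical replica that always fails, standing in for a compromised node."""
    raise ConnectionError("replica unavailable")


def stable_replica(payload: str) -> str:
    """Hypothetical healthy replica."""
    return f"ok:{payload}"


router = FailoverRouter({"node-a": flaky_replica, "node-b": stable_replica})
first = router.call("req-1")   # node-a fails once, node-b answers
second = router.call("req-2")  # node-a fails again and leaves the rotation
```

After the second call, only `node-b` remains in the healthy list, so later requests no longer pay the cost of trying the failed node first.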

This emerging model of AI resilience supports scalable, secure growth that can keep pace with business demands.

For teams aiming to integrate these innovations, collaboration tools that synchronize development and security workflows become indispensable, accelerating safe deployments at scale.

Quotable insights:

- “AI security isn’t a one-and-done fix—it’s a continuous dance between attackers and defenders.”

- “Building AI resilience means expecting failure and designing systems that bounce back smarter.”

- “Collaboration between security and AI teams is your best bet against evolving threats.”

Picture this: your AI deployment detects a subtle data poisoning attempt, isolates the affected module, and triggers an automated retraining—all while keeping your app running smoothly. That’s the power of resilient, secure AI at scale.

Secure AI scales when architecture adapts and teams collaborate. Keeping security dynamic and distributed isn’t optional—it’s survival.

Benefits of AI at Scale

Scaling AI initiatives unlocks a host of advantages for organizations ready to move beyond pilot projects and into enterprise-wide transformation. By embracing scalable AI solutions, businesses can automate complex workflows, extract actionable insights from massive datasets, and accelerate their journey toward digital maturity. Whether you’re optimizing back-office operations or launching new AI-driven products, scaling AI initiatives can help you achieve greater efficiency, smarter decision-making, and a culture of continuous innovation.

Improved Efficiency

AI at scale is a game-changer for operational efficiency. By deploying scalable AI models across business functions, organizations can automate repetitive tasks, streamline end-to-end processes, and optimize how resources are allocated. For example, AI-driven chatbots can handle thousands of customer inquiries simultaneously, freeing up your team to focus on higher-value work. Predictive maintenance powered by AI models can anticipate equipment failures before they happen, reducing costly downtime and boosting productivity. With scalable AI, you minimize manual errors, increase throughput, and realize significant cost savings—all while empowering your workforce to focus on what matters most.

Enhanced Decision-Making

Scalable AI solutions empower organizations to make faster, more informed decisions by harnessing real-time data analysis. AI models can sift through vast amounts of information, uncover hidden patterns, and deliver predictive insights that drive data-driven decision making. Imagine being able to forecast demand shifts, optimize inventory, or detect fraud in real time—these are the kinds of competitive advantages that AI at scale delivers. By integrating scalable AI into your analytics stack, you can respond to market changes with agility, outpace competitors, and ensure every strategic move is backed by robust, data-driven intelligence.

Increased Innovation

When organizations embrace AI at scale, they open the door to continuous innovation. Scalable AI solutions make it possible to experiment with new business models, launch AI-powered applications, and tap into emerging technologies like natural language processing. This enables companies to create personalized customer experiences, develop new products, and even enter untapped markets. By fostering a culture where scalable AI is central to your innovation strategy, you position your business to stay ahead of industry trends, adapt quickly to changing customer needs, and drive sustainable growth.

AI in Various Industries: Real-World Applications and Impact

AI is rapidly transforming business operations across a wide range of industries, delivering measurable improvements in efficiency, decision-making, and innovation. From automating routine tasks to enabling entirely new business models, AI is reshaping how organizations operate and compete in the digital age.

Healthcare

In the healthcare sector, scalable AI models and solutions are revolutionizing patient care and operational workflows. AI-driven technologies are helping clinicians diagnose diseases more accurately and efficiently, using advanced computer vision to interpret medical images or analyze patient data. Predictive analytics powered by AI models enable healthcare providers to anticipate patient needs, optimize staffing, and allocate resources more effectively. By leveraging scalable AI, healthcare organizations can reduce costs, minimize errors, and improve patient outcomes. AI-driven tools also support medical research, accelerate drug discovery, and streamline clinical trials, paving the way for faster development of life-saving treatments. Ultimately, scalable AI solutions are empowering healthcare professionals to deliver higher-quality care and enhance the overall patient experience.

Towards Agentic and Autonomous AI: The Next Frontier in Scalability

Concept and Advantages of Agentic AI Systems

Agentic AI refers to modular, autonomous AI agents that work together and evolve in real-time, unlike traditional static AI models.

These agents offer big benefits:

- Flexibility to adapt quickly to changing data and environments

- Live governance allowing continuous oversight and adjustment

- Improved scalability by breaking down monolithic models into collaborative components

Picture your AI system like a team of specialists, each handling a part of the task, communicating constantly, and self-correcting as conditions shift.

Enterprises using agentic AI can:

- Address operational bottlenecks by distributing workloads

- Respond faster to shifts in customer behavior or market trends

- Maintain better uptime through localized self-repair
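To make the "team of specialists" picture concrete, here is a deliberately tiny sketch of a coordinator that routes tasks to specialist agents by task kind and falls back to a backup agent when one fails. Everything here is hypothetical: real agentic systems involve far richer planning, messaging, and oversight, and the agents below are plain functions used purely for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class Task:
    kind: str     # which specialty the task needs
    payload: str  # the work item itself


class Coordinator:
    """Route each task to an agent registered for its kind, with local fallback."""

    def __init__(self) -> None:
        self.agents: Dict[str, List[Callable[[str], str]]] = {}
        self.incidents: List[str] = []  # record of agent failures for oversight

    def register(self, kind: str, agent: Callable[[str], str]) -> None:
        self.agents.setdefault(kind, []).append(agent)

    def dispatch(self, task: Task) -> str:
        for agent in self.agents.get(task.kind, []):
            try:
                return agent(task.payload)
            except Exception as exc:
                # The fault stays local: log it and try the next agent of this kind.
                self.incidents.append(f"{task.kind}: {exc!r}")
        raise LookupError(f"no agent could handle task kind {task.kind!r}")


def summarizer(payload: str) -> str:
    return payload[:10]  # toy "summary": the first ten characters


def broken_translator(payload: str) -> str:
    raise RuntimeError("translator offline")


def backup_translator(payload: str) -> str:
    return payload.upper()  # toy "translation"


coordinator = Coordinator()
coordinator.register("summarize", summarizer)
coordinator.register("translate", broken_translator)
coordinator.register("translate", backup_translator)
```

A failure in one translator does not affect summarization at all, and the `incidents` list gives operators a trail to review, which is the kind of live governance the modular approach is meant to enable.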

Integrating Agentic AI Within Business and Technical Ecosystems

Embedding agentic AI isn’t just plugging in new software—it requires thoughtful integration:

- Align modular agents with existing workflows and tech stacks

- Develop communication protocols for agents to share data and decisions

- Build infrastructure to support real-time data flows and autonomous decision-making

The payoff?

- Boosted organizational agility and shorter innovation cycles

- Competitive edge through continuous, adaptive AI improvements

- Reduced reliance on periodic manual retraining or full-system updates

One key insight: smarter AI models alone won’t solve scalability without system-level changes that enable live interaction and evolution across the AI ecosystem (The enterprise AI paradox: why smarter models alone aren’t the answer).

Why Agentic AI Matters in 2025

By 2028, AI training could draw gigawatts of power; splitting intelligence into smaller autonomous agents may help distribute resource loads more sustainably (AI training to have whopping power needs, report says).

In practice, think of agentic AI as a fleet of drones instead of one big airplane: each drone adjusts to obstacles individually but works toward the same mission.

These systems redefine scalability: the goal is no longer bigger models, but smarter, faster-adapting ones.

Embracing agentic AI means your production AI can respond to real-world complexity with agility and resilience, rather than just brute-force computing.

Agentic AI unlocks a transformative shift in deploying scalable AI: modularity meets autonomy, enabling AI to evolve live within your business ecosystem.

This modular, adaptive approach is your path to overcoming the limits of traditional AI scaling—making complex deployments feel more like a dynamic conversation than a monolithic challenge.

Ready to turn static models into evolving collaborators? Agentic AI is where production scalability is headed next.

Conclusion

Scaling AI models in production today means embracing smarter, more flexible approaches over simply bigger and heavier systems. When you shift to modular architectures like agentic AI and invest in resilient, efficient infrastructure, you unlock real-world scalability that respects both your bottom line and operational complexity.

To move forward confidently, focus on the practices that turn challenges into competitive advantages:

- Prioritize seamless integration of AI with your existing workflows to avoid costly bottlenecks

- Implement continuous monitoring and adaptive retraining to keep models reliable as your data evolves

- Adopt energy-efficient infrastructure and cloud orchestration to balance cost, performance, and sustainability

- Build robust, security-driven architectures that can detect and respond to emerging threats in real time

- Explore agentic AI systems to distribute workloads intelligently and accelerate innovation cycles

Start today by reviewing your AI deployment’s architecture for flexibility, setting up performance monitoring, or experimenting with pruning and quantization to cut resource waste.

Scaling AI isn’t just about technology; it’s a mindset shift to dynamic, actionable growth — where your models evolve, your infrastructure adapts, and your business runs smarter every step of the way.

“Great AI at scale isn’t built overnight—it’s grown steadily through thoughtful design, relentless tuning, and fearless iteration.”

Your next breakthrough in AI scalability is waiting on the other side of action. Get out there and build it.